Benjamin M. Eidelson and Ian Lustick (2004)

VIR-POX: An Agent-Based Analysis of Smallpox Preparedness and Response Policy

Journal of Artificial Societies and Social Simulation

vol. 7, no. 3

<https://www.jasss.org/7/3/6.html>

To cite articles published in the Journal of Artificial Societies and Social Simulation, reference the above information and include paragraph numbers if necessary

Received: 01-Oct-2003 Accepted: 18-Apr-2004 Published: 30-Jun-2004

Abstract

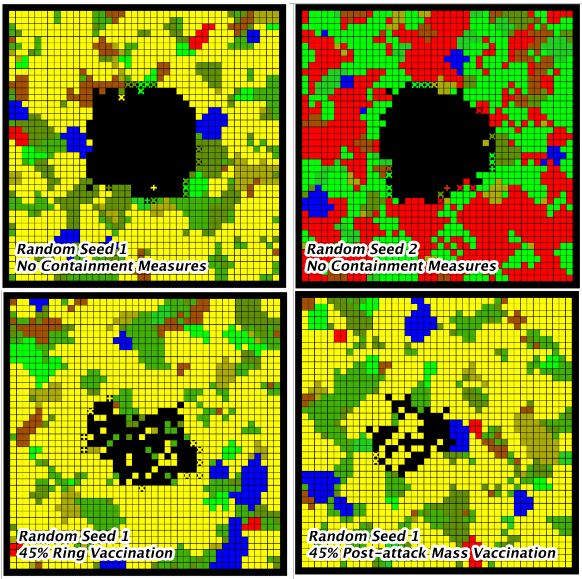

Figure 1. Four different snapshots of the VIR-POX model in operation. Black cells represent agents that contracted the virus and subsequently became isolated from their social environment, either by death or by relocation to a medical facility. Cells marked with an "X" indicate infected and contagious agents that have not yet died or sought medical treatment. An agent's color indicates its currently activated identity.

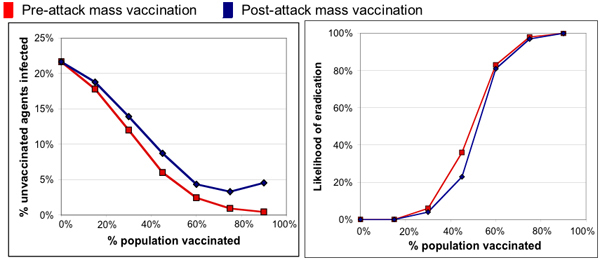

Figure 2. Main effects of mass vaccination

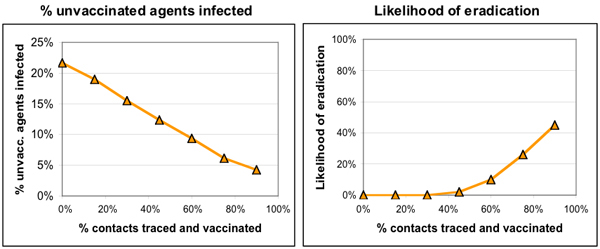

Figure 3. Main effects of ring vaccination

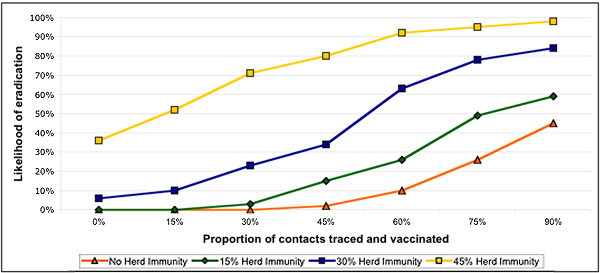

Figure 4. Effect of herd immunity on ring vaccination

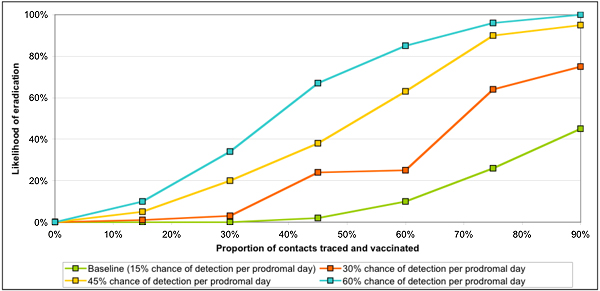

Figure 5. Effect of early (prodromal) isolation on ring vaccination

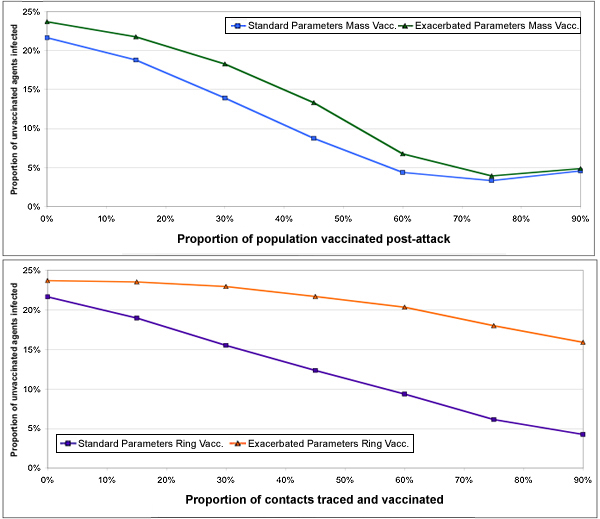

Figure 6. Effectiveness of post-attack mass vaccination and ring vaccination under standard and exacerbated conditions

2 For representative work deploying constructivist models of human identity and behavior in various disciplines see: Eley and Suny 1996; Kowert and Legro 1996; Nagel 1994; Verdery 1991; Abrams and Hogg 1990; Hogg and McGarty 1990; Stryker and Serpe 1982; and Huddy 2001.

3 The five-month timeframe is based on a conservative approach to available data. Of the last pre-eradication smallpox outbreaks, one of the longest had a duration of 80 days from onset to resolution (Foege et al. 1975). We doubled this duration to gauge the effectiveness of response strategies that might take substantially longer than those employed earlier in the century, in part because of the probable lapse in herd immunity since smallpox was last confronted.

4 We considered including scenarios with multiple first-generation cases - i.e. in which more than one agent is infected at the outset. In this initial series of experiments, we chose not to explore the implications of such scenarios. In any event, when the matrix is understood as a social cross-section of a larger population, a single initial case does not necessarily indicate an attack that initially infects only one person; it merely suggests that only one of the 1,800 people monitored in the simulation was among those infected.

5 For the sake of transparency, this algorithm is fully explicated in the appendix.

6 For the purposes of our simulation model, “vaccination” refers to successful inoculation such that the recipient becomes fully immune to the smallpox virus. Accordingly, “failed takes” and the imperfection inherent in the vaccine might require that it be administered to some portion of the population greater than 50% in order to achieve the effect of our 50% vaccination scenario.
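The relationship in note 6 can be illustrated with a short calculation. This is only a sketch, not part of the VIR-POX model: it assumes that each administered dose "takes" independently with a fixed probability, and the function name `coverage_needed` and the take rates used below are hypothetical values chosen for illustration, not figures from the article.

```python
def coverage_needed(target_immune_fraction: float, take_rate: float) -> float:
    """Fraction of the population that must receive the vaccine so that
    `target_immune_fraction` ends up successfully immunized.

    Assumption (not from the article): every dose succeeds independently
    with probability `take_rate`.
    """
    if not 0.0 < take_rate <= 1.0:
        raise ValueError("take_rate must be in (0, 1]")
    if not 0.0 <= target_immune_fraction <= 1.0:
        raise ValueError("target_immune_fraction must be in [0, 1]")
    needed = target_immune_fraction / take_rate
    if needed > 1.0:
        raise ValueError("target cannot be reached even with full coverage")
    return needed


if __name__ == "__main__":
    # Hypothetical take rates, for illustration only.
    for take_rate in (1.00, 0.95, 0.90):
        needed = coverage_needed(0.50, take_rate)
        print(f"take rate {take_rate:.2f}: vaccinate {needed:.1%} of the population")
```

Under this assumption, any take rate below 1 pushes the required coverage above the 50% modeled in the simulation, which is the point the note makes.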

7 For a general overview of all of these issues, see Veenema 2002. For more specific information regarding the logistical difficulties entailed by post-attack vaccination, see Bicknell 2002, and regarding the incidences of various vaccine-related complications see Henderson et al. 1999.

8 Specifically, the possibility of detection and isolation during prodrome was removed, and the cumulative likelihood of detection during each day of the early rash phase was halved (D1 and D2 in the appendix, respectively).

9 The strength of the relationship between ring vaccination and early detection might also suggest that ring vaccination could become more feasible if contacts were isolated after vaccination, such that even those who were infected but could no longer be effectively vaccinated would not be able to infect others. This scenario was not examined in our initial set of experiments, and might be impossible to implement in reality anyway, but could possibly improve the efficacy of ring vaccination.

ALIBEK, Ken with Stephen HANDELMAN (1999) Biohazard New York: Random House, Inc.

ANDERSON, Roy M. and Robert M. MAY (1991) Infectious Diseases of Humans: Dynamics and Control New York: Oxford Science Publications.

BICKNELL, William J. (2002) "The Case for Voluntary Smallpox Vaccination," New England Journal of Medicine, Vol. 346, no. 17 (April).

BOZZETTE, Samuel A., Rob Boer, Vibha Bhatnagar, Jennifer L. Brower, Emmett B. Keeler, Sally C. Morton, Michael A. Stoto (2003) "A Model for Smallpox Vaccination Policy," New England Journal of Medicine, Vol. 348, no. 5 (January).

CENTERS FOR DISEASE CONTROL AND PREVENTION (2002) "Smallpox Fact Sheet," Atlanta, Georgia: Department of Health and Human Services. http://www.bt.cdc.gov/agent/smallpox (Accessed June 26, 2003).

CENTERS FOR DISEASE CONTROL AND PREVENTION (2002) "Smallpox Response Plan and Guidelines, Draft 3.0," Atlanta, Georgia: Department of Health and Human Services. http://www.bt.cdc.gov/agent/smallpox/response-plan/index.asp (Accessed June 26, 2003).

ELEY, Geoff and Ronald Grigor Suny (1996) "Introduction: From the Moment of Social History to the Work of Cultural Representation," in Becoming National: A Reader, Geoff Eley and Ronald Grigor Suny, eds. New York: Oxford University Press, pp. 3-37.

FOEGE, William H., J. Donald Millar, Donald A. Henderson (1975) "Smallpox eradication in West and Central Africa," Bulletin of the World Health Organization, Vol. 52, no. 2, pp. 209-222.

FAUCI, Anthony S. (2002) "Smallpox Vaccination Policy - The Need for Dialogue," New England Journal of Medicine, Vol. 346, no. 17 (April).

GANI, Raymond and Steve Leach (2001) "Transmission potential of smallpox in contemporary populations," Nature, Vol. 414, pp. 748-751 (December).

HALLORAN, M. Elizabeth, Ira M. Longini Jr., Azhar Nizam, Yang Yang (2002) "Containing Bioterrorist Smallpox," Science, Vol. 298, pp. 1428-1432 (November).

HAMMARLUND, Erika, Matthew W. Lewis, Scott G. Hansen, Lisa I. Strelow, Jay A. Nelson, Gary J. Sexton, Jon M. Hanifin, Mark K. Slifka (2003) "Duration of antiviral immunity after smallpox vaccination," Nature Medicine, Vol. 9, no. 9, pp. 1131-1137 (September).

HENDERSON, Donald A. (1998) "Bioterrorism as a public health threat," Emerging Infectious Diseases, Vol. 4, no. 3.

HENDERSON, Donald A. (1999) "The Looming Threat of Bioterrorism," Science, Vol. 283, no. 5406 (February).

HENDERSON, Donald A., Thomas V. Inglesby, John G. Bartlett, Michael S. Ascher, Edward Eitzen, Peter B. Jahrling, Jerome Hauer, Marcelle Layton, Joseph McDade, Michael T. Osterholm, Tara O'Toole, Gerald Parker, Trish Perl, Philip K. Russell, Kevin Tonat (1999) "Smallpox as a Biological Weapon: Medical and Public Health Management," Journal of the American Medical Association, Vol. 281, no. 22 (June).

HOGG, Michael A. and Craig McGarty (1990) "Self-categorization and social identity," in Social Identity Theory: Constructive and Critical Advances, Dominic Abrams and Michael A. Hogg, eds. New York: Springer-Verlag, pp. 1-27.

HUDDY, Leonie (2001) "From social to political identity: A critical examination of social identity theory," Political Psychology, Vol. 22, no. 1.

JOHNSON, Paul E. (1999) "Simulation Modeling in Political Science," American Behavioral Scientist, Vol. 42, no. 10.

KAPLAN, Edward H., David L. Craft, Lawrence M. Wein (2002) "Emergency response to a smallpox attack: The case for mass vaccination," Proceedings of the National Academy of Sciences, Vol. 99, no. 16 (August).

KOOPMAN, Jim (2002) "Controlling Smallpox," Science, Vol. 298, pp. 1342-1344 (November).

KOWERT, Paul and Jeffrey Legro (1996) "Norms, Identity, and Their Limits: A Theoretical Reprise," in The Culture of National Security: Norms and Identity in World Politics, Peter J. Katzenstein, ed. New York: Columbia University Press, pp. 451-97.

LUSTICK, Ian S. (2000) "Agent-Based Modelling of Collective Identity: Testing Constructivist Theory," Journal of Artificial Societies and Social Simulation, Vol. 3, no. 1 https://www.jasss.org/3/1/1.html.

MACK, Thomas (2003) "A Different View on Smallpox and Vaccination," New England Journal of Medicine, Vol. 348, no. 5 (January).

MACY, Michael W. and Robert Willer (2002) "From factors to actors: computational sociology and agent-based modeling," Annual Review of Sociology, Vol. 28, pp. 143-166.

MELTZER, Martin I., Inger Damon, James W. LeDuc, J. Donald Millar (2001) "Modeling Potential Responses to Smallpox as a Bioterrorist Weapon," Emerging Infectious Diseases, Vol. 7, no. 6 (November-December).

MILLAR, J. Donald (2001) "It's bad policy to hold back smallpox vaccine," Milwaukee Journal Sentinel, December 27.

NAGEL, Joane (1994) "Constructing Ethnicity: Creating and Recreating Ethnic Identity and Culture," Social Problems, Vol. 41, no. 1 (February).

REYNOLDS, Craig W. (1987) "Flocks, Herds, and Schools: A Distributed Behavioral Model," Computer Graphics, Vol. 21, no. 4 (July).

STRYKER, Sheldon and R.T. Serpe (1982) "Commitment, identity salience, and role behavior," in Personality, roles, and social behavior, W. Ickes and E. S. Knowles, eds. New York: Springer-Verlag, pp. 199-218.

TANNER, Adam (Reuters) (2001) "Russian Germ Warfare Experts Raise Alarm," Clinical Infectious Diseases, Vol. 33, no. 12 (December).

TUCKER, Jonathan B. (2001) Scourge: The Once and Future Threat of Smallpox New York: Grove Press.

VEENEMA, Tener G. (2002) "The smallpox vaccine debate," American Journal of Nursing, Vol. 102, no. 9.

VERDERY, Katherine (1991) National Ideology Under Socialism: Identity and Cultural Politics in Ceausescu's Romania Berkeley: University of California Press.

© Copyright Journal of Artificial Societies and Social Simulation, [2004]